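Rate–distortion theory (also called information rate–distortion theory) is a major branch of information theory. Its basic problem can be stated as follows: given a source (input signal) distribution and a distortion measure, what is the minimum expected distortion achievable at a given rate; or, equivalently, what is the minimum rate required so that the expected distortion does not exceed a given limit. The symbol D denotes distortion.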

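Avoiding distortion completely is almost never possible. Allowing a limited amount of distortion when processing a signal reduces the information rate that is needed. In 1959, Claude Shannon published "Coding Theorems for a Discrete Source with a Fidelity Criterion", which introduced the concept of the rate–distortion function.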

Distortion functions

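A distortion function quantifies the difference between input and output so that it can be analyzed mathematically. Let the input signal be $x \in \mathcal{X}$ and the output signal be $\hat{x} \in \hat{\mathcal{X}}$, and define the distortion function as $d(x, \hat{x})$. Many definitions are possible; in every case the codomain is the nonnegative real numbers:

$$d: \mathcal{X} \times \hat{\mathcal{X}} \to [0, \infty).$$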

Hamming distortion

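The Hamming distortion function describes the error rate. It is defined as:

$$d(x, \hat{x}) = \begin{cases} 0, & x = \hat{x}, \\ 1, & x \neq \hat{x}. \end{cases}$$

Taking the expected value of the Hamming distortion gives the probability of a transmission error: $E[d(X, \hat{X})] = \Pr(X \neq \hat{X})$.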
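As a minimal illustration (the function names are hypothetical, chosen for this sketch), the Python code below implements the Hamming distortion and averages it over a pair of sequences. The average is simply the fraction of positions that differ, which is the empirical error rate.

```python
def hamming_distortion(x, x_hat):
    """Per-symbol Hamming distortion: 0 if the symbols match, 1 otherwise."""
    return 0 if x == x_hat else 1


def average_distortion(source, reconstruction, d):
    """Empirical average of a per-symbol distortion d over two equal-length sequences."""
    if len(source) != len(reconstruction):
        raise ValueError("sequences must have the same length")
    return sum(d(a, b) for a, b in zip(source, reconstruction)) / len(source)


# Two of the eight symbols differ (positions 2 and 5),
# so the average Hamming distortion (the error rate) is 2/8.
sent = [0, 1, 1, 0, 1, 0, 0, 1]
received = [0, 1, 0, 0, 1, 1, 0, 1]
print(average_distortion(sent, received, hamming_distortion))  # 0.25
```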

Squared-error distortion

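Squared-error distortion is the most commonly used distortion measure for sources with continuous alphabets. It is defined as:

$$d(x, \hat{x}) = (x - \hat{x})^2.$$

Squared-error distortion is poorly suited to speech and images, because human perception of speech and images does not correspond closely to squared error.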
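A small sketch of squared-error distortion in Python. It assumes a hypothetical toy quantizer, `uniform_quantize`, that rounds each sample to the nearest multiple of a step size; the quantizer exists only to produce a reconstruction to measure and is not a method from the text.

```python
import math


def squared_error(x, x_hat):
    """Squared-error distortion d(x, x_hat) = (x - x_hat)^2."""
    return (x - x_hat) ** 2


def uniform_quantize(x, step):
    """Hypothetical toy quantizer: round x to the nearest multiple of `step`."""
    return step * math.floor(x / step + 0.5)


def mean_squared_distortion(source, reconstruction):
    """Empirical average of the squared-error distortion over two sequences."""
    if len(source) != len(reconstruction):
        raise ValueError("sequences must have the same length")
    return sum(squared_error(a, b) for a, b in zip(source, reconstruction)) / len(source)


samples = [0.3, -1.2, 2.05, 0.0]
reconstructed = [uniform_quantize(x, 0.5) for x in samples]  # [0.5, -1.0, 2.0, 0.0]
print(mean_squared_distortion(samples, reconstructed))
```

With a step of 0.5 the per-sample errors are 0.2, 0.2, 0.05 and 0, so the mean squared distortion is about 0.0206.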

Rate–distortion functions

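The rate–distortion function is given by the following minimization relating rate and distortion:

$$R(D) = \inf_{Q_{Y|X}(y|x)} I_Q(Y;X) \ \mbox{subject to}\ E[D_Q[X,Y]] \leq D.$$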

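Here $Q_{Y|X}(y|x)$, sometimes called a test channel, is the conditional probability density function (PDF) of the channel output $Y$ (the compressed signal) given the source $X$ (the original signal), and $I_Q(Y;X)$ is the mutual information between $Y$ and $X$, defined as

$$I(Y;X) = H(Y) - H(Y|X).$$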

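Here $H(Y)$ is the entropy of the output signal $Y$, and $H(Y|X)$ is the conditional entropy of the output given the source signal. They are, respectively:

$$H(Y) = -\int_{-\infty}^{\infty} P_Y(y) \log_2 P_Y(y)\, dy,$$

$$H(Y|X) = -\int_{-\infty}^{\infty} \int_{-\infty}^{\infty} Q_{Y|X}(y|x)\, P_X(x) \log_2 Q_{Y|X}(y|x)\, dx\, dy.$$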

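The problem can also be formulated as a distortion–rate function, which gives the infimum of the achievable distortion under a rate constraint: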

$$D(R) = \inf_{Q_{Y|X}(y|x)} E[D_Q[X,Y]] \ \mbox{subject to}\ I_Q(Y;X) \leq R.$$

The two formulations lead to functions that are inverses of each other.
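The text defines $R(D)$ as an optimization over test channels but does not say how to solve it. For a source with a finite alphabet, one standard numerical method, swapped in here and not described in this article, is the Blahut–Arimoto algorithm. It alternates between the best test channel for a fixed output distribution and the output distribution induced by that channel; a positive trade-off parameter `beta` (an assumption of this sketch) selects which point of the curve is returned. Rates are in bits. The checks at the end compare the result against the known binary-source curve $R(D) = H(p) - H(D)$.

```python
import math


def blahut_arimoto(p_x, dist, beta, max_iter=5000, tol=1e-12):
    """Approximate one point (D, R) on the rate-distortion curve of a discrete source.

    p_x  -- source probabilities p(x_i)
    dist -- distortion matrix, dist[i][j] = d(x_i, y_j)
    beta -- positive trade-off parameter: larger beta gives lower D and higher R
    Returns (expected distortion, mutual information in bits) of the final test channel.
    """
    n_x, n_y = len(p_x), len(dist[0])
    q_y = [1.0 / n_y] * n_y  # initial output distribution: uniform
    for _ in range(max_iter):
        # Test channel Q(y|x) proportional to q(y) * exp(-beta * d(x, y))
        channel = []
        for i in range(n_x):
            w = [q_y[j] * math.exp(-beta * dist[i][j]) for j in range(n_y)]
            s = sum(w)
            channel.append([wj / s for wj in w])
        # Output marginal q(y) = sum_x p(x) Q(y|x) induced by this channel
        new_q = [sum(p_x[i] * channel[i][j] for i in range(n_x)) for j in range(n_y)]
        done = max(abs(a - b) for a, b in zip(new_q, q_y)) < tol
        q_y = new_q
        if done:
            break
    # E[d(X, Y)] and I(X; Y) of the final channel (q_y is its exact output marginal)
    distortion = sum(p_x[i] * channel[i][j] * dist[i][j]
                     for i in range(n_x) for j in range(n_y))
    rate = sum(p_x[i] * channel[i][j] * math.log2(channel[i][j] / q_y[j])
               for i in range(n_x) for j in range(n_y) if channel[i][j] > 0)
    return distortion, rate


def binary_entropy(p):
    """Binary entropy H_b(p) in bits."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


hamming = [[0.0, 1.0], [1.0, 0.0]]
d_fair, r_fair = blahut_arimoto([0.5, 0.5], hamming, beta=2.0)
d_skew, r_skew = blahut_arimoto([0.8, 0.2], hamming, beta=3.0)
# Both points should lie on the binary curve R(D) = H_b(p) - H_b(D)
print(r_fair, 1.0 - binary_entropy(d_fair))
print(r_skew, binary_entropy(0.2) - binary_entropy(d_skew))
```

Each value of `beta` yields one point; sweeping it traces the whole curve. For Hamming distortion the returned distortion works out to $D = 1/(1 + e^{\beta})$, which the test below also checks.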

Memoryless (independent) Gaussian source

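If we assume that $P_X(x)$ is Gaussian with variance $\sigma^2$, and that successive samples of $X$ are independent (equivalently, the source is memoryless, or the signal is uncorrelated), we obtain the following analytical expression for the rate–distortion function: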

[1]

[1]

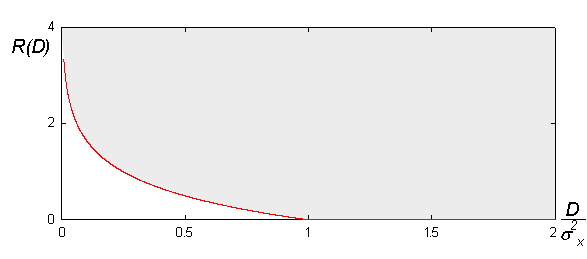

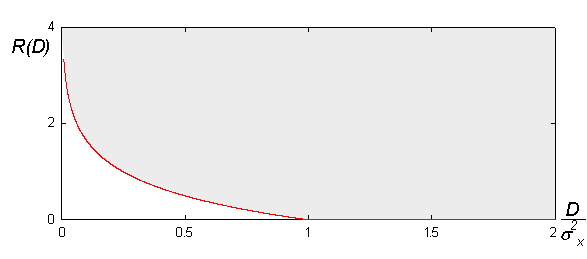

下圖是本公式的幾何面貌:

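Rate–distortion theory tells us that “no compression system exists that performs outside the gray area”. The closer a practical compression system comes to the red (lower) boundary, the better it performs. As a general rule, the boundary can only be approached by increasing the coding block length. Nevertheless, even at unit block length one can often find good (scalar) quantizers that operate at a practically useful distance from the rate–distortion function.[1]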

This rate–distortion function holds only for Gaussian memoryless sources.
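A minimal sketch, assuming squared-error distortion and a source with variance $\sigma^2$, of the closed form $R(D) = \frac{1}{2}\log_2(\sigma^2/D)$ for $0 \le D \le \sigma^2$ (and 0 beyond) together with its inverse $D(R) = \sigma^2 2^{-2R}$. For comparison it also evaluates a unit-block-length scalar quantizer: the optimal 1-bit quantizer for a Gaussian, with reconstruction levels $\pm\sigma\sqrt{2/\pi}$, has mean squared error $\sigma^2(1 - 2/\pi) \approx 0.363\sigma^2$. That figure is a standard textbook result added here, not taken from the text. At 1 bit per sample the bound is $0.25\sigma^2$, so the gap shows how far a single-sample quantizer sits from the boundary. All function names are hypothetical.

```python
import math
import random


def gaussian_rate_distortion(variance, distortion):
    """R(D) in bits/sample for a memoryless N(0, variance) source, squared error."""
    if distortion < 0:
        raise ValueError("distortion must be nonnegative")
    if distortion >= variance:
        return 0.0  # reconstructing every sample as the mean already meets D
    if distortion == 0:
        return math.inf  # exact description of a continuous source needs infinite rate
    return 0.5 * math.log2(variance / distortion)


def gaussian_distortion_rate(variance, rate):
    """Inverse curve D(R) = variance * 2^(-2R)."""
    return variance * 2.0 ** (-2.0 * rate)


def one_bit_quantizer_distortion(variance):
    """MSE of the best 1-bit scalar quantizer, levels +/- sigma*sqrt(2/pi)."""
    return variance * (1.0 - 2.0 / math.pi)


def simulate_one_bit_quantizer(variance, n=200_000, seed=0):
    """Monte Carlo estimate of the same 1-bit quantizer's MSE."""
    rng = random.Random(seed)
    sigma = math.sqrt(variance)
    level = sigma * math.sqrt(2.0 / math.pi)
    total = 0.0
    for _ in range(n):
        x = rng.gauss(0.0, sigma)
        x_hat = level if x >= 0.0 else -level  # quantize by sign
        total += (x - x_hat) ** 2
    return total / n


print(gaussian_rate_distortion(1.0, 0.25))  # 1.0 bit per sample
print(gaussian_distortion_rate(1.0, 1.0))   # 0.25
print(one_bit_quantizer_distortion(1.0))    # about 0.363
print(simulate_one_bit_quantizer(1.0))      # close to the previous line
```

The Monte Carlo estimate uses a fixed seed so that it is reproducible.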

Binary source

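For a Bernoulli source $X \sim \mathrm{Bernoulli}(p)$, the rate–distortion function under Hamming distortion is:

$$R(D) = \begin{cases} H(p) - H(D), & 0 \le D \le \min(p, 1-p), \\ 0, & D > \min(p, 1-p), \end{cases}$$

where $H(\cdot)$ is the binary entropy function.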
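A short sketch of the binary formula $R(D) = H(p) - H(D)$ for $0 \le D \le \min(p, 1-p)$ (and 0 beyond), where $H$ is the binary entropy function in bits. The function names are hypothetical.

```python
import math


def binary_entropy(p):
    """Binary entropy H(p) in bits, with H(0) = H(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def bernoulli_rate_distortion(p, distortion):
    """R(D) in bits/symbol for a Bernoulli(p) source under Hamming distortion."""
    if not 0.0 <= p <= 1.0 or distortion < 0.0:
        raise ValueError("need 0 <= p <= 1 and D >= 0")
    if distortion >= min(p, 1.0 - p):
        return 0.0  # always guessing the more likely symbol already achieves D
    return binary_entropy(p) - binary_entropy(distortion)


print(bernoulli_rate_distortion(0.5, 0.0))   # 1.0 (lossless: H(0.5) = 1 bit)
print(bernoulli_rate_distortion(0.5, 0.11))  # about 0.5
print(bernoulli_rate_distortion(0.2, 0.25))  # 0.0
```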

Parallel Gaussian sources

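The rate–distortion function of parallel Gaussian sources is given by the classic reverse water-filling algorithm: there is a threshold $\lambda$ such that only sources whose variance exceeds $\lambda$ need to be allocated bits. The remaining sources are allocated no bits; each is reconstructed as its mean, contributing a distortion equal to its variance, which is no larger than $\lambda$.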

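We can compute the rate–distortion function of parallel Gaussian sources under squared-error distortion. Note that the sources need not be identically distributed: let

$$X_i \sim \mathcal{N}(0, \sigma_i^2), \quad i = 1, 2, \ldots, m,$$

be independent, and let the distortion be the sum of the squared errors, $\sum_{i=1}^{m} (x_i - \hat{x}_i)^2$. The rate–distortion function is then

$$R(D) = \sum_{i=1}^{m} \frac{1}{2} \log_2 \frac{\sigma_i^2}{D_i},$$

where

$$D_i = \begin{cases} \lambda, & \lambda < \sigma_i^2, \\ \sigma_i^2, & \lambda \ge \sigma_i^2, \end{cases}$$

and $\lambda$ must satisfy the constraint

$$\sum_{i=1}^{m} D_i = D.$$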
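The reverse water-filling rule can be sketched in Python under the stated assumptions: independent zero-mean Gaussian components, summed squared-error distortion, rate in bits. Because $\sum_i \min(\lambda, \sigma_i^2)$ grows monotonically with $\lambda$, the water level can be found by bisection; this search strategy and the function name `reverse_water_filling` are choices made for this sketch, not part of the text.

```python
import math


def reverse_water_filling(variances, total_distortion, iters=200):
    """R(D) for independent N(0, sigma_i^2) components under summed squared error.

    Returns (rate in bits, per-component distortions D_i, water level lambda).
    """
    if not variances or total_distortion <= 0:
        raise ValueError("need at least one component and a positive distortion")
    if total_distortion >= sum(variances):
        # Every component can be reconstructed as its mean (0): no bits needed.
        return 0.0, list(variances), max(variances)
    # sum_i min(lambda, sigma_i^2) increases with lambda, so bisect for the level
    lo, hi = 0.0, max(variances)
    for _ in range(iters):
        lam = 0.5 * (lo + hi)
        if sum(min(lam, v) for v in variances) > total_distortion:
            hi = lam
        else:
            lo = lam
    lam = 0.5 * (lo + hi)
    d = [min(lam, v) for v in variances]  # D_i = lambda if lambda < sigma_i^2, else sigma_i^2
    # Components with D_i = sigma_i^2 contribute zero bits, so skip them
    rate = sum(0.5 * math.log2(v / di) for v, di in zip(variances, d) if di < v)
    return rate, d, lam


rate, d, lam = reverse_water_filling([4.0, 1.0, 0.25], total_distortion=1.5)
print(lam)   # about 0.625
print(d)     # about [0.625, 0.625, 0.25]
print(rate)  # about 1.678 bits
```

In this example the component with variance 0.25 lies below the water level $\lambda = 0.625$ and is allocated no bits; the other two components each end up with distortion $\lambda$.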

1. Thomas M. Cover, Joy A. Thomas. Elements of Information Theory. John Wiley & Sons, New York, 2006.
